A Brief History of the Multi-Core Desktop CPU

It's hard to overemphasize how far computers have come and how they have transformed just about every aspect of our lives. From rudimentary devices like toasters to cutting-edge devices like spacecraft, you'll be hard pressed to find one that doesn't make use of some form of computing capability.

At the heart of every one of these devices is some form of CPU, responsible for executing program instructions as well as coordinating all the other parts that make the computer tick. For an in-depth explainer on what goes into CPU design and how a processor works internally, check out this amazing series here on TechSpot. For this article, however, the focus is on a single aspect of CPU design: the multi-core architecture and how it's driving the performance of modern CPUs.

Unless you're using a computer from two decades ago, chances are you have a multi-core CPU in your system, and this isn't limited to full-sized desktop and server-grade systems, but applies to mobile and low-power devices as well. To cite a single mainstream example, the Apple Watch Series 7 touts a dual-core CPU. Considering this is a small device that wraps around your wrist, it shows just how much design innovations help raise the performance of computers.

On the desktop side, taking a look at recent Steam hardware surveys can tell us how much multi-core CPUs dominate the PC market. Over 70% of Steam users have a CPU with 4 or more cores. But before we delve any deeper into the focus of this article, it's appropriate to define some terminology. Even though we're limiting the scope to desktop CPUs, most of the things we discuss apply equally to mobile and server CPUs in different capacities.

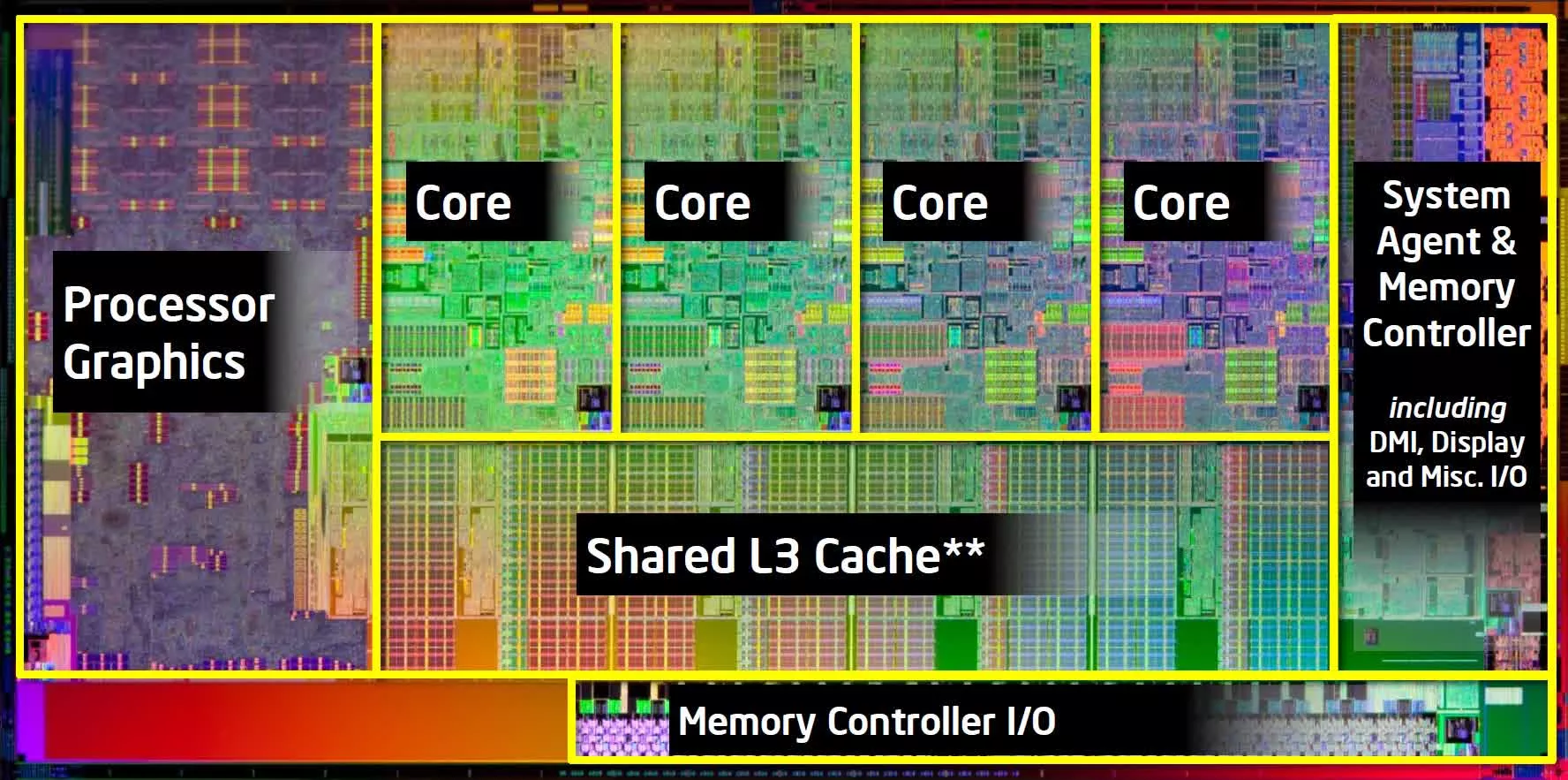

First and foremost, let's define what a "core" is. A core is a fully self-contained microprocessor capable of executing a computer program. The core usually consists of arithmetic, logic, and control units as well as caches and data buses, which allow it to independently execute program instructions.

The term multi-core simply refers to a CPU that combines more than one core in a processor package and functions as one unit. This configuration allows the individual cores to share some common resources such as caches, and this helps to speed up program execution. Ideally, you'd expect performance to scale linearly with the number of cores a CPU has, but this is usually not the case and is something we'll discuss later in this article.
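One common way to see why core count and performance don't scale linearly is Amdahl's law, a standard model that this article does not name itself. The sketch below assumes a fixed-size workload where only a fraction of the work can be spread across cores; the function name and the 90% figure are illustrative choices, not measurements.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup predicted by Amdahl's law for a fixed-size workload.

    parallel_fraction: share of the work (0.0 to 1.0) that can run in parallel.
    cores: number of cores the parallel share is divided across.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    # The serial share always runs on one core, whatever the core count.
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Illustrative assumption: 90% of the program is parallelizable.
    for cores in (1, 2, 4, 8, 16):
        print(cores, round(amdahl_speedup(0.9, cores), 2))
```

Under that 90% assumption, 16 cores yield a speedup of only about 6.4x rather than 16x, because the serial 10% quickly becomes the bottleneck.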

Another aspect of CPU design that causes a bit of confusion for many people is the distinction between a physical and a logical core. A physical core refers to the physical hardware unit that is actualized by the transistors and circuitry that make up the core. On the other hand, a logical core refers to the independent thread-execution ability of the core. This behavior is made possible by a number of factors that go beyond the CPU core itself and depends on the operating system to schedule these process threads. Another important factor is that the program being executed has to be developed in a way that lends itself to multithreading, and this can sometimes be challenging because the instructions that make up a single program are hardly ever independent.

Moreover, the logical core represents a mapping of virtual resources to physical core resources, and hence in the event a physical resource is being used by one thread, other threads that require the same resource have to be stalled, which affects performance. What this means is that a single physical core can be designed in a way that allows it to execute more than one thread concurrently, where the number of logical cores in this case represents the number of threads it can execute simultaneously.
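The relationship between physical and logical cores can be sketched with a little arithmetic. The helper below is hypothetical (its name and input format are assumptions made for illustration), and the chip layouts are made-up examples. The one real API used is `os.cpu_count()`, which reports the number of logical CPUs the operating system sees and may return `None`.

```python
import os


def logical_core_count(clusters):
    """Sum the logical cores exposed by groups of physical cores.

    clusters: iterable of (physical_cores, threads_per_core) pairs, so
    (8, 2) means eight physical cores that each run two threads.
    """
    total = 0
    for physical_cores, threads_per_core in clusters:
        if physical_cores < 0 or threads_per_core < 1:
            raise ValueError("invalid cluster description")
        total += physical_cores * threads_per_core
    return total


if __name__ == "__main__":
    # Hypothetical chip: 8 cores with two-way multithreading plus 8 without.
    print("hypothetical hybrid chip:", logical_core_count([(8, 2), (8, 1)]))
    # Logical CPUs visible to the OS on this machine (None if unknown).
    print("this machine:", os.cpu_count())
```

Note that the operating system schedules threads onto logical cores, so the second number is what most software sees, not the physical core count.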

Virtually all desktop CPU designs from Intel and AMD are limited to two-way simultaneous multithreading (SMT), while some CPUs from IBM offer up to eight-way SMT, but these are more often seen in server and workstation systems. The synergy between CPU, operating system, and user application program provides an interesting insight into how the development of these independent components influences each other, but in order not to be sidetracked, we'll leave this for a future article.

Before multi-core CPUs

Taking a brief look into the pre-multi-core era will enable us to develop an appreciation for just how far we have come. A single-core CPU, as the name implies, usually refers to CPUs with a single physical core. The earliest commercially available microprocessor was the Intel 4004, which was a technical marvel at the time it was released in 1971.

This 4-bit 750kHz CPU revolutionized not just microprocessor design but the entire integrated circuit industry. Around that same time, other notable processors like the Texas Instruments TMS-0100 were developed to compete in similar markets, which consisted of calculators and control systems. Since then, processor performance improvements were mainly due to clock frequency increases and data/address bus width expansion. This is evident in designs like the Intel 8086, a single-core processor released in 1978 with a max clock frequency of 10MHz, a 16-bit data width, and a 20-bit address width.

Going from the Intel 4004 to the 8086 represented a ten-fold increase in transistor count, a pace that remained consistent for subsequent generations as specifications increased. In addition to the typical frequency and data-width increases, other innovations which helped to improve CPU performance included dedicated floating-point units, multipliers, as well as general instruction set architecture (ISA) improvements and extensions.

Continued research and investment led to the first pipelined CPU design in the Intel i386 (80386), which allowed it to run multiple instructions in parallel. This was achieved by separating the instruction execution flow into distinct stages, and hence as one instruction was being executed in one stage, other instructions could be executed in the other stages.
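The benefit of overlapping stages can be shown with a simple cycle count. This is an idealized sketch under stated assumptions: every stage takes exactly one clock cycle, and there are no stalls, hazards, or branch mispredictions. The function names and the five-stage figure are illustrative and do not describe the i386's actual pipeline.

```python
def unpipelined_cycles(instructions: int, stages: int) -> int:
    """Cycles needed when each instruction finishes all stages before the next starts."""
    return instructions * stages


def pipelined_cycles(instructions: int, stages: int) -> int:
    """Cycles needed in an ideal pipeline with one instruction entering per cycle.

    The first instruction takes `stages` cycles to drain through the pipeline;
    every later instruction completes one cycle after the one before it.
    """
    if instructions <= 0:
        return 0
    return stages + (instructions - 1)


if __name__ == "__main__":
    n, k = 100, 5  # illustrative: 100 instructions, 5 pipeline stages
    print("unpipelined:", unpipelined_cycles(n, k))
    print("pipelined:  ", pipelined_cycles(n, k))
```

For 100 instructions and 5 stages, the ideal pipeline needs 104 cycles instead of 500. Real pipelines fall short of this whenever an instruction depends on the result of one that is still in flight.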

The superscalar architecture was introduced as well, which can be thought of as the precursor to the multi-core design. Superscalar implementations duplicate some instruction execution units, which allows the CPU to run multiple instructions at the same time, provided there are no dependencies between the instructions being executed. The earliest commercial CPUs to implement this technology included the Intel i960CA, AMD 29000 series, and Motorola MC88100.

One of the major contributing factors to the rapid increase in CPU performance in each generation was transistor technology, which allowed the size of the transistor to be reduced. This helped to significantly decrease the operating voltages of these transistors and allowed CPUs to cram in massive transistor counts and reduce chip area, while increasing caches and adding other dedicated accelerators.

In 1999, AMD released the now classic and fan-favorite Athlon CPU, hitting the mind-boggling 1GHz clock frequency months later, along with the whole host of technologies we've talked about to this point. The chip offered remarkable performance. Better yet, CPU designers continued to optimize and innovate on new features such as branch prediction and multithreading.

The culmination of these efforts resulted in what's regarded as one of the top single-core desktop CPUs of its time (and the ceiling of what could be achieved in terms of clock speeds), the Intel Pentium 4, running at up to 3.8GHz and supporting two threads. Looking back at that era, most of us expected clock frequencies to keep increasing and were hoping for CPUs that could run at 10GHz and beyond, but one could excuse our ignorance since the average PC user was not as tech-informed as they are today.

The increasing clock frequencies and shrinking transistor sizes resulted in faster designs, but this came at the cost of higher power consumption due to the proportional relation between frequency and power. This power increase results in increased leakage current, which does not seem like much of a problem when you have a chip with 25,000 transistors, but with modern chips having billions of transistors, it does pose a huge problem.
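The proportional relation between frequency and power can be sketched with the usual first-order model for switching (dynamic) power in CMOS logic, P ≈ α · C · V² · f. This formula is a textbook approximation brought in for illustration, not something the article states, and it ignores leakage. The function name and all numeric values below are made-up assumptions.

```python
def dynamic_power(capacitance_f: float, voltage_v: float,
                  frequency_hz: float, activity: float = 1.0) -> float:
    """First-order CMOS switching power in watts: activity * C * V^2 * f.

    capacitance_f: effective switched capacitance in farads (assumed value).
    voltage_v: supply voltage in volts.
    frequency_hz: clock frequency in hertz.
    activity: fraction of the capacitance switched per cycle (0.0 to 1.0).
    """
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


if __name__ == "__main__":
    base = dynamic_power(1e-9, 1.2, 2e9)     # illustrative 2GHz part
    faster = dynamic_power(1e-9, 1.2, 4e9)   # same voltage, double the clock
    hotter = dynamic_power(1e-9, 1.4, 4e9)   # higher clock and higher voltage
    print(base, faster, hotter)
```

In this model, doubling the clock at a fixed voltage doubles the power. In practice higher clocks tend to need higher voltages as well, and because voltage enters the model squared, power then climbs faster than frequency.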

Significantly increasing temperature can cause chips to break down since the heat cannot be dissipated effectively. This limit on clock frequency increases meant designers had to rethink CPU design if there was to be any meaningful progress in continuing the trend of improving CPU performance.

Enter the multi-core era

If we liken single-core processors with multiple logical cores to a single human with as many arms as logical cores, then multi-core processors will be like a single human with multiple brains and a corresponding number of arms as well. Technically, having multiple brains means your ability to think could increase dramatically. But before our minds drift too far away thinking about the character we just visualized, let's take a step back and look at one more computer design that preceded the multi-core design, and that is the multi-processor system.

These are systems that have more than one physical CPU and a shared main memory pool and peripherals on a single motherboard. Like most system innovations, these designs were primarily geared towards special-purpose workloads and applications of the kind we see in supercomputers and servers. The concept never took off on the desktop front due to how badly its performance scaled for most typical consumer applications. The fact that the CPUs had to communicate over external buses and RAM meant they had to deal with significant latencies. RAM is "fast" but compared to the registers and caches that reside in the core of the CPU, RAM is quite slow. Also, the fact that most desktop programs were not designed to take advantage of these systems meant the cost of building a multi-processor system for home and desktop use was not worth it.

Conversely, because the cores of a multi-core CPU design are much closer together and built on a single package, they have faster buses to communicate on. Moreover, these cores have shared caches which are separate from their individual caches, and this helps to improve inter-core communication by decreasing latency dramatically. In addition, the level of coherence and core cooperation meant performance scaled better when compared to multi-processor systems, and desktop programs could take better advantage of this. In 2001 we saw the first true multi-core processor released by IBM under their Power4 architecture, and as expected it was geared towards workstation and server applications. In 2005, however, Intel released its first consumer-focused dual-core processor, which was a multi-core design, and later that same year AMD released their version with the Athlon X2 architecture.

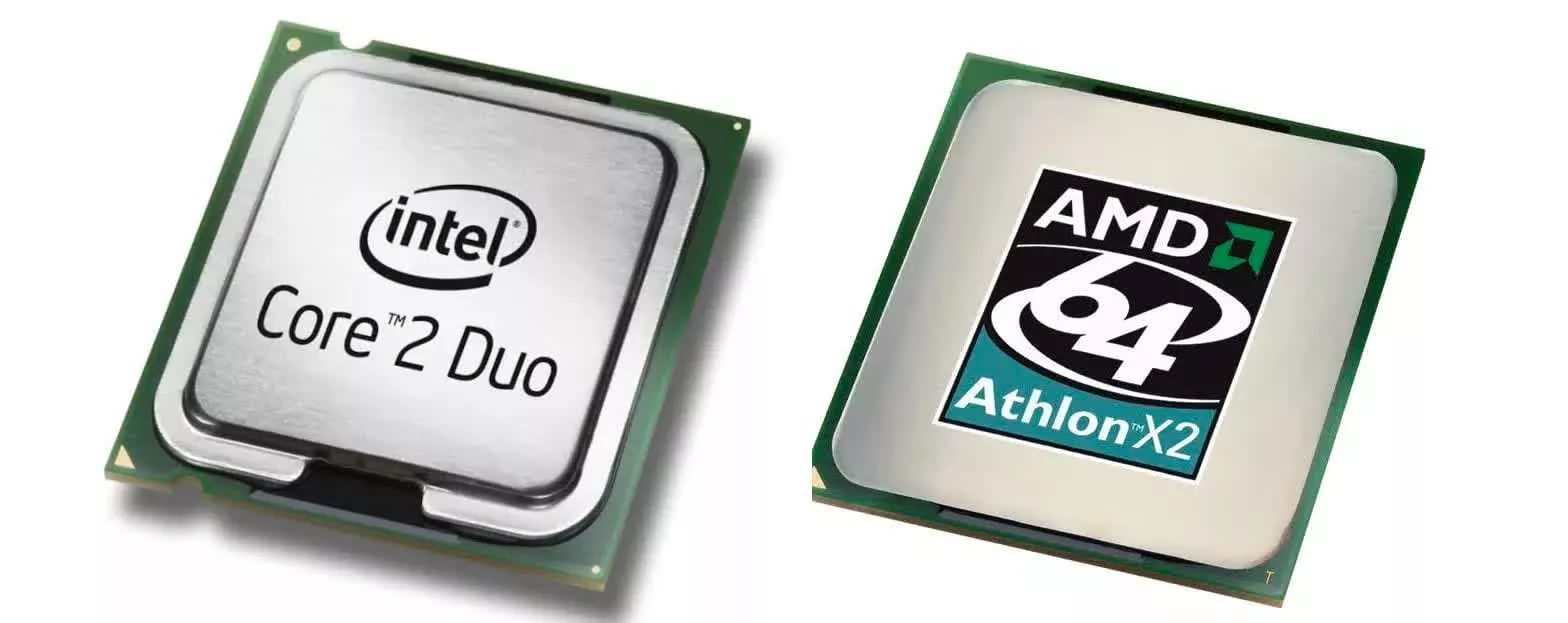

As the GHz race slowed down, designers had to focus on other innovations to improve the performance of CPUs, and gains came primarily from a number of design optimizations and general architecture improvements. One of the key aspects was the multi-core design, which attempted to increase core counts with each generation. A defining moment for multi-core designs was the release of Intel's Core 2 series, which started out as dual-core CPUs and went up to quad-cores in the generations that followed. Likewise, AMD followed with the Athlon 64 X2, which was a dual-core design, and later the Phenom series, which included tri- and quad-core designs.

These days both companies ship multi-core CPU series. Intel's 10th-gen Core series maxed out at 10 cores/20 threads, while the newer 12th-gen series goes up to 24 threads with a hybrid design that packs 8 performance cores that support multi-threading, plus 8 efficient cores that don't. Meanwhile, AMD has its Zen 3 powerhouse with a whopping 16 cores and 32 threads. And those core counts are expected to increase and also mix it up with big.LITTLE approaches, as the 12th-gen Core family just did.

In addition to the core counts, both companies have increased cache sizes and cache levels, as well as added new ISA extensions and architecture optimizations. This struggle for total desktop domination has resulted in a couple of hits and misses for both companies.

Up to this point we have ignored the mobile CPU space, but like all innovations that trickle from one space to the other, advancements in the mobile sector, which focuses on efficiency and performance per watt, have led to some very efficient CPU designs and architectures.

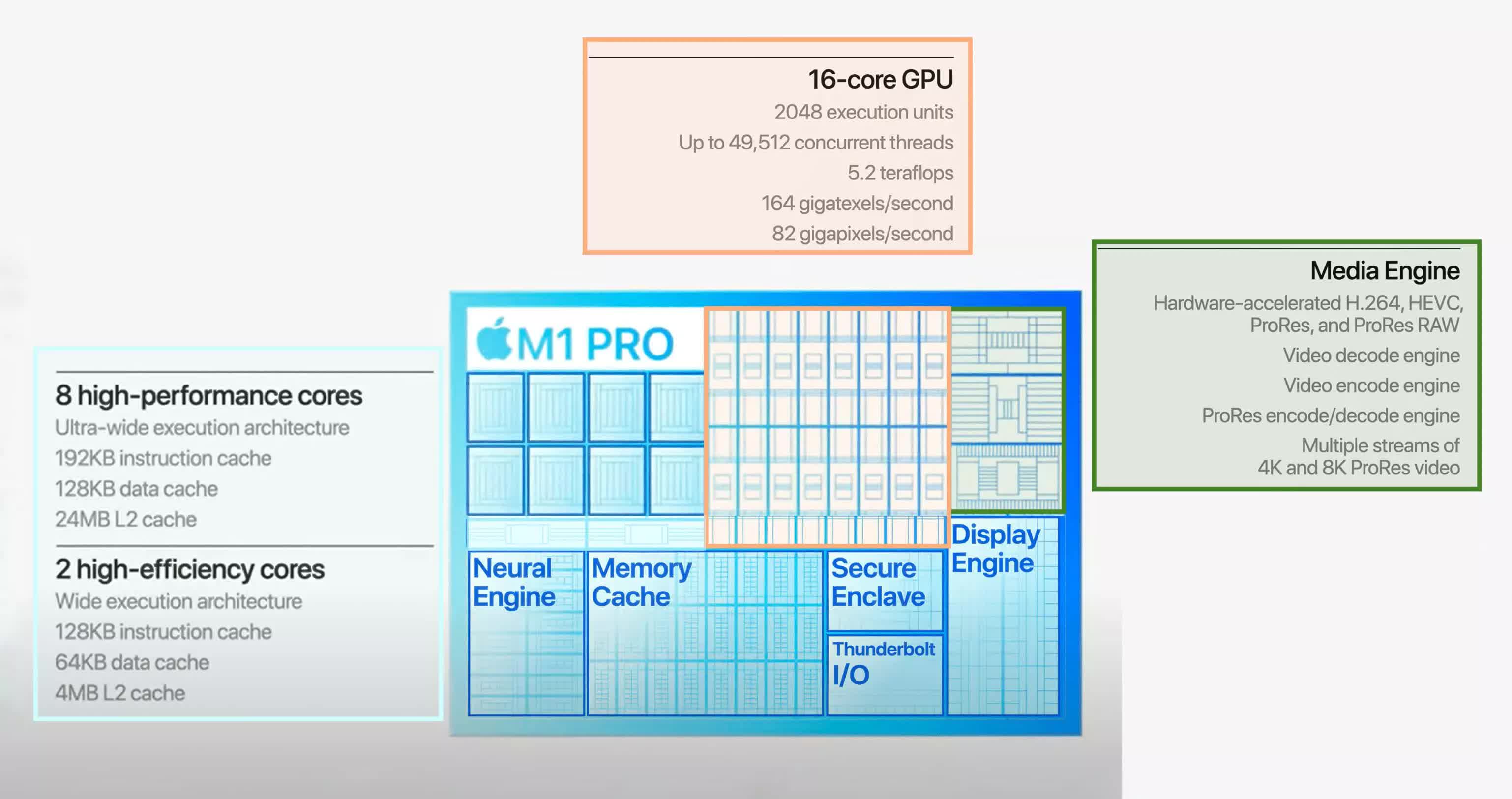

As fully demonstrated by the Apple M1 chip, well-designed CPUs can have both efficient power consumption profiles and excellent performance, and with the introduction of native Arm support in Windows 11, the likes of Qualcomm and Samsung are guaranteed to make an effort to chip away some share of the laptop market.

The adoption of these efficient design strategies from the low-power and mobile sector has not happened overnight, but has been the result of continued effort by CPU makers like Intel, Apple, Qualcomm, and AMD to tailor their chips to work in portable devices.

What's next for the desktop CPU

Just as the single-core architecture has become one for the history books, the same could be the eventual fate of today's multi-core architecture. In the interim, Intel and AMD seem to be taking different approaches to balancing performance and power efficiency.

Intel's latest desktop CPUs (a.k.a. Alder Lake) implement a unique architecture which combines high-performance cores with high-efficiency cores in a configuration that seems to be taken straight out of the mobile CPU market, with the highest model having a high-performance 8-core/16-thread part in addition to a low-power 8-core part, making a total of 16 cores and 24 threads.

AMD, on the other hand, seems to be pushing for more cores per CPU, and if rumors are to be believed, the company is bound to release a whopping 32-core desktop CPU in their next-generation Zen 4 architecture, which seems pretty believable at this point looking at how AMD literally builds their CPUs by grouping multiple core complexes, each having multiple cores, on the same die.

Outside of rumors though, AMD has confirmed the introduction of what it calls 3D V-Cache, which allows it to stack a large cache on top of the processor's core, and this has the potential of decreasing latency and increasing performance drastically. This implementation represents a new form of chip packaging and is an area of research that holds much potential for the future.

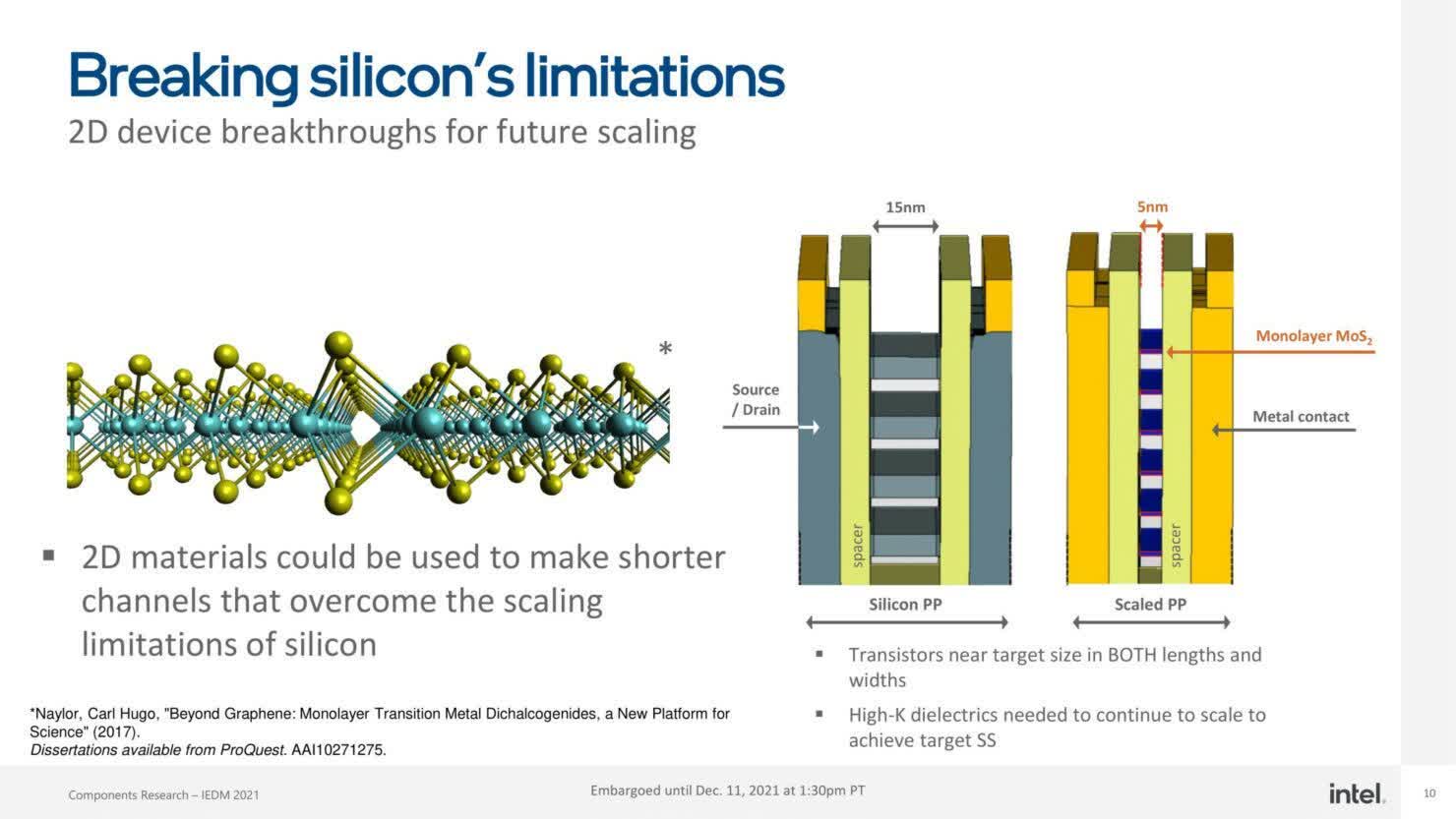

On the downside however, transistor technology as we know it is nearing its limit as we continue to see sizes shrink. Currently, 5nm seems to be the cutting edge and even though the likes of TSMC and Samsung have announced trials on 3nm, we seem to be approaching the 1nm limit very fast. As to what follows after that, we'll have to wait and see.

For now a lot of effort is being put into researching suitable replacements for silicon, such as carbon nanotubes, which are smaller than silicon and can help keep the size-shrink ongoing for a while longer. Another area of research has to do with how transistors are structured and packaged into dies, like with AMD's V-Cache stacking and Intel's Foveros 3D packaging, which can go a long way to improve IC integration and increase performance.

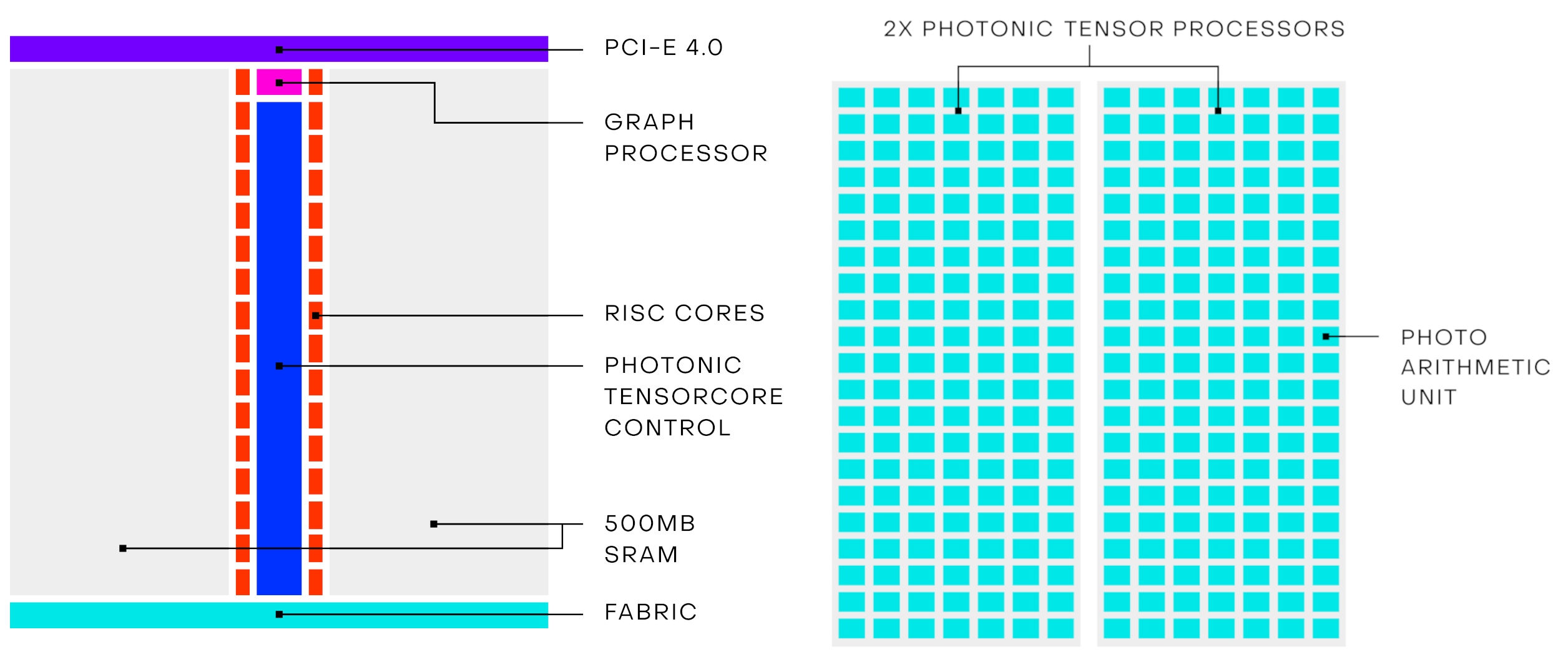

Another area that holds promise to revolutionize computing is photonic processors. Unlike traditional semiconductor transistor technology that is built around electronics, photonic processors use light, or photons, instead of electrons. Given the properties of light, with its significantly lower impedance compared to electrons that have to travel through metal wiring, this has the potential to dramatically improve processor speeds. Realistically, we may be decades away from realizing complete optical computers, but in the next few years we could well see hybrid computers that combine photonic CPUs with traditional electronic motherboards and peripherals to bring about the performance uplifts we desire.

Lightmatter, LightElligence and Optalysys are a few of the companies that are working on optical computing systems in one form or another, and surely there are many others in the background working to bring this technology to the mainstream.

Another popular and yet dramatically different computing paradigm is that of quantum computers, which is still in its infancy, but the amount of research and progress being made there is tremendous.

The first 1-Qubit processors were announced not too long ago, and yet a 54-Qubit processor was announced by Google in 2019 and claimed to have achieved quantum supremacy, which is a fancy way of saying their processor can do something a traditional CPU cannot do in a realistic amount of time.

Not to be outdone, a team of Chinese designers unveiled their 66-Qubit supercomputer in 2021, and the race keeps heating up with companies like IBM announcing their 127-Qubit quantum-computing chip and Microsoft announcing their own efforts to develop quantum computers.

Even though chances are you won't be using any of these systems in your gaming PC anytime soon, there's always the possibility of at least some of these novel technologies making it into the consumer space in one form or another. Mainstream adoption of new technologies has generally been one of the ways to drive costs down and pave the way for more investment into better technologies.

That's been our brief history of the multi-core CPU, preceding designs, and forward-looking paradigms that could replace the multi-core CPU as we know it today. If you'd like to dive deeper into CPU technology, check out our Anatomy of the CPU (and the entire Anatomy of Hardware series), our series on how CPUs work, and the full history of the microprocessor.

Source: https://www.techspot.com/article/2363-multi-core-cpu/

Posted by: morrisgonstornes.blogspot.com